Understanding the Rise of AI Deepfakes in Cybersecurity

AI deepfakes have evolved from novelty videos into sophisticated tools for cybercriminals. In 2026, synthetic media poses serious risks to data privacy and identity verification systems. Deepfakes are produced with deep generative models, such as generative adversarial networks (GANs), autoencoders, and increasingly diffusion models, which create realistic audio and video forgeries that mimic real people with alarming accuracy.

Attackers leverage deepfakes primarily for phishing, social engineering, and financial fraud. For example, a deepfake video call can impersonate a CEO authorizing wire transfers, bypassing traditional verification methods. Businesses and individuals must recognize these threats to implement proactive defenses.

How Deepfakes Enable Modern Cyber Attacks

Deepfakes amplify classic attack vectors. In phishing campaigns, forged video messages from trusted contacts trick victims into revealing credentials. Social engineering attacks use deepfake audio to impersonate family members in distress, extracting sensitive information or funds.

Reported incidents in 2026 include a case in which a financial firm lost millions of dollars after a deepfake video call convinced staff to approve fraudulent transactions. Another involved identity theft during remote hiring, where candidates used deepfakes to pass video interviews. These examples highlight the urgency of robust countermeasures.

Key Detection Methods for AI Deepfakes

Effective defense begins with reliable detection. Forensic analysis tools examine pixel inconsistencies, lighting mismatches, and audio artifacts too subtle for people to see or hear. Behavioral biometrics add another layer by analyzing micro-expressions, speech patterns, and eye movements during live interactions.

Popular approaches include:

- AI-powered detection software that flags unnatural blinking or lip-sync errors

- Blockchain-based verification for authenticating video sources

- Multi-spectral analysis checking for digital manipulation signatures
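The blink-based signal in the first approach can be sketched with the eye aspect ratio (EAR), a widely used heuristic computed from six eye landmarks that drops sharply when the eye closes. This is a minimal sketch, not a production detector: it assumes per-frame landmarks already come from some face-landmark model (not shown), and the closure threshold (0.21), the minimum closure length, and the 4 to 40 blinks-per-minute "plausible" band are illustrative assumptions rather than calibrated values. Many modern deepfakes blink convincingly, so a clean result proves little on its own.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """EAR from six landmarks: p1/p4 are the eye corners, p2/p3 sit on the
    upper lid and p6/p5 on the lower lid, so p2-p6 and p3-p5 are vertical."""
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ears: Sequence[float], threshold: float = 0.21,
                 min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames below `threshold`.
    Both defaults are illustrative assumptions, not calibrated values."""
    blinks = 0
    run = 0
    for ear in ears:
        if ear < threshold:
            run += 1
            continue
        if run >= min_frames:
            blinks += 1
        run = 0
    if run >= min_frames:  # a blink still in progress at the end of the clip
        blinks += 1
    return blinks


def blink_rate_is_suspicious(ears: Sequence[float], fps: float,
                             low: float = 4.0, high: float = 40.0) -> bool:
    """Flag clips whose blinks per minute fall outside an assumed
    plausible band [low, high]; the band itself is hypothetical."""
    if fps <= 0 or not ears:
        raise ValueError("need a positive frame rate and at least one frame")
    minutes = len(ears) / fps / 60.0
    rate = count_blinks(ears) / minutes
    return not (low <= rate <= high)
```

For example, a one-minute clip at 30 fps in which the EAR never drops below the threshold counts zero blinks and is flagged, while a clip with about 15 short closures per minute is not. A real detector would combine this with many other cues instead of relying on it alone.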

Step-by-Step Implementation of Multi-Factor Verification

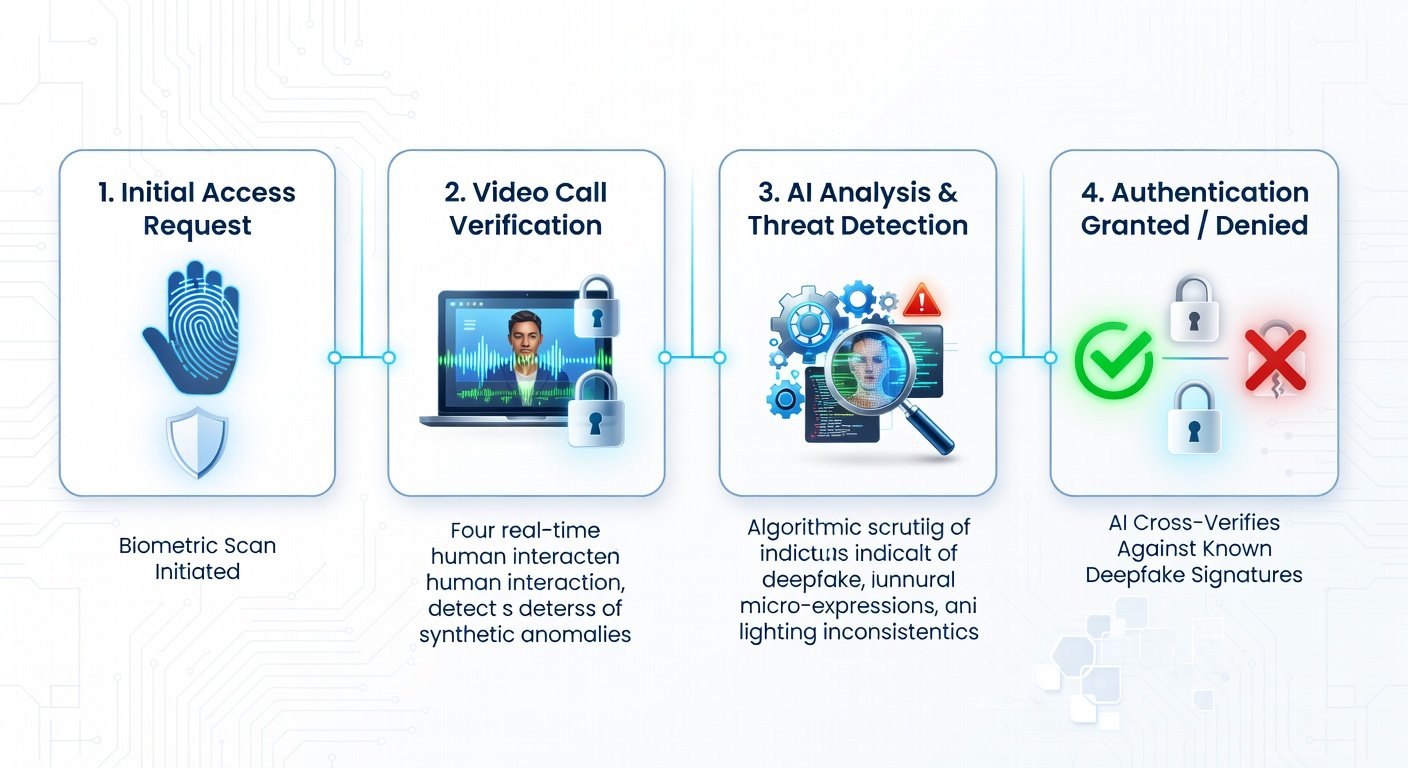

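Organizations should adopt layered verification protocols. Start by combining knowledge-based factors (something you know, such as a password) with possession-based ones (something you have, such as a registered phone or hardware security key), then add inherence-based biometrics (something you are, such as a face or voice). Because deepfakes target exactly the face and voice, biometrics alone are not enough: for video calls, require secondary confirmation via a separate channel, such as a pre-registered phone number or app notification.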
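The separate-channel confirmation can be sketched as follows. This is a minimal illustration under stated assumptions: `OutOfBandVerifier`, the `directory` mapping, and the `send` callback are hypothetical names standing in for whatever staff directory and SMS or push-notification service an organization actually uses, and the 5-minute code lifetime and 3-attempt limit are also assumptions. The key design point is that the confirmation target comes from a directory registered in advance, never from contact details offered during the suspicious call.

```python
import hmac
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class _Pending:
    code: str
    expires_at: float
    attempts: int = 0


class OutOfBandVerifier:
    """Hypothetical helper: confirms a high-risk request (for example a wire
    transfer asked for on a video call) through a pre-registered channel."""

    def __init__(self, directory: Dict[str, str],
                 send: Callable[[str, str, str], None],
                 ttl_seconds: float = 300.0, max_attempts: int = 3,
                 clock: Callable[[], float] = time.monotonic):
        self.directory = directory  # requester -> contact registered in advance
        self.send = send            # assumed delivery hook: (contact, request_id, code)
        self.ttl = ttl_seconds
        self.max_attempts = max_attempts
        self.clock = clock
        self.pending: Dict[str, _Pending] = {}

    def start(self, request_id: str, requester: str) -> None:
        # Never use contact details supplied during the call itself: an attacker
        # controls those. Only the pre-registered directory entry counts.
        contact = self.directory.get(requester)
        if contact is None:
            raise ValueError(f"no pre-registered contact for {requester!r}; refuse")
        code = f"{secrets.randbelow(10**6):06d}"  # 6-digit one-time code
        self.pending[request_id] = _Pending(code, self.clock() + self.ttl)
        self.send(contact, request_id, code)

    def confirm(self, request_id: str, submitted: str) -> bool:
        entry = self.pending.get(request_id)
        if entry is None:
            return False
        if self.clock() > entry.expires_at:
            del self.pending[request_id]  # expired codes cannot be reused
            return False
        entry.attempts += 1
        # Constant-time comparison avoids leaking how many digits matched.
        if hmac.compare_digest(entry.code.encode(), submitted.encode()):
            del self.pending[request_id]  # codes are single use
            return True
        if entry.attempts >= self.max_attempts:
            del self.pending[request_id]  # lock out after repeated failures
        return False
```

In this sketch, the employee who received the request calls `start()`, and the genuine executive, reached on the registered device, relays the code back through that same channel. If the call was a deepfake, the real executive has no reason to expect the code, which surfaces the fraud before any money moves.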

Practical steps include:

- Enable liveness detection in all video authentication systems

- Train employees to request real-time actions like specific gestures during calls

- Deploy enterprise-grade AI detection platforms alongside existing security suites

- Regularly audit and update verification policies based on emerging threats
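The gesture requests in the steps above are harder to anticipate when they are randomized. The sketch below is illustrative only: the prompt pool, the two-step combination, and the 5-second response limit are assumptions, and deciding whether the gesture was actually performed is left to the human on the call (`performed_correctly`) rather than to any detection model.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable

# Illustrative prompt pool (an assumption): large head turns and hands passing
# in front of the face are commonly suggested because real-time face swaps
# may struggle to render them cleanly.
GESTURES = (
    "turn your head fully to the left",
    "turn your head fully to the right",
    "pass a hand slowly in front of your face",
    "touch your nose with one finger",
    "hold up {n} fingers",
)

_rng = secrets.SystemRandom()  # OS-backed randomness, so prompts are unpredictable


@dataclass(frozen=True)
class Challenge:
    prompt: str
    issued_at: float


def issue_challenge(k: int = 2,
                    clock: Callable[[], float] = time.monotonic) -> Challenge:
    """Combine k distinct gestures into one prompt the caller could not pre-render."""
    picks = _rng.sample(GESTURES, k)
    steps = [g.format(n=_rng.randint(1, 5)) for g in picks]
    return Challenge(" then ".join(steps), clock())


def evaluate(challenge: Challenge, performed_correctly: bool,
             responded_at: float, max_delay: float = 5.0) -> bool:
    """Pass only if the verifier saw the gesture done correctly and promptly.
    `performed_correctly` is the human's judgement; `max_delay` is an assumed limit."""
    delay = responded_at - challenge.issued_at
    return performed_correctly and 0.0 <= delay <= max_delay
```

A prompt such as "touch your nose with one finger then hold up 3 fingers" is cheap for a real person but hard to prepare in advance; an unusually long pause before responding is itself worth treating as a red flag.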

Comparing Free vs Paid Deepfake Detection Tools

Free tools offer basic analysis suitable for individuals but often lack real-time monitoring and enterprise support. Paid solutions provide advanced forensic capabilities, continuous updates, and integration with business workflows. Choose based on your risk profile: personal users may start with open-source options, while companies benefit from subscription services with dedicated threat intelligence.

Actionable Security Tips for 2026

Both individuals and businesses can reduce exposure. Use strong, unique passwords with password managers, enable multi-factor authentication everywhere, and verify suspicious requests through known contact methods. Stay informed via resources from CISA and NIST. Conduct regular security awareness training focused on deepfake red flags.
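Password managers generate credentials for you, but the idea behind a strong, unique password is simply enough randomness drawn from a cryptographically secure source. A minimal sketch using Python's standard `secrets` module, similar in spirit to the recipe in its documentation; the 20-character default, the 12-character floor, and the character-class rules are assumptions, and in practice the password manager's own generator is the better choice.

```python
import secrets
import string

# Letters, digits, and ASCII punctuation; some sites reject certain symbols,
# so this alphabet is an assumption to adjust per service.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a random password containing at least one lowercase letter,
    uppercase letter, digit, and symbol. The 12-character floor is an assumption."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    while True:
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate
```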

FAQ: Spotting Deepfakes in Video Calls

How can I tell if a video call is a deepfake? Watch for unnatural eye movements, delayed audio responses, or background inconsistencies. Request a spontaneous action like holding up a specific object.

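Are there reliable apps to detect deepfakes? Yes, several AI tools analyze streams in real time for manipulation indicators, although none is foolproof, so treat a detector's verdict as one signal alongside out-of-band verification.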

What should businesses do after a suspected incident? Immediately isolate affected systems, report to authorities, and review all recent transactions.

Conclusion

AI deepfakes represent an escalating cybersecurity challenge in 2026, but layered defenses make them manageable. By combining detection technologies, rigorous verification processes, and ongoing education, users can protect their identities and data effectively. Stay vigilant and adapt your strategies as threats evolve.
