Why Ethical AI in Machine Learning Matters in 2026

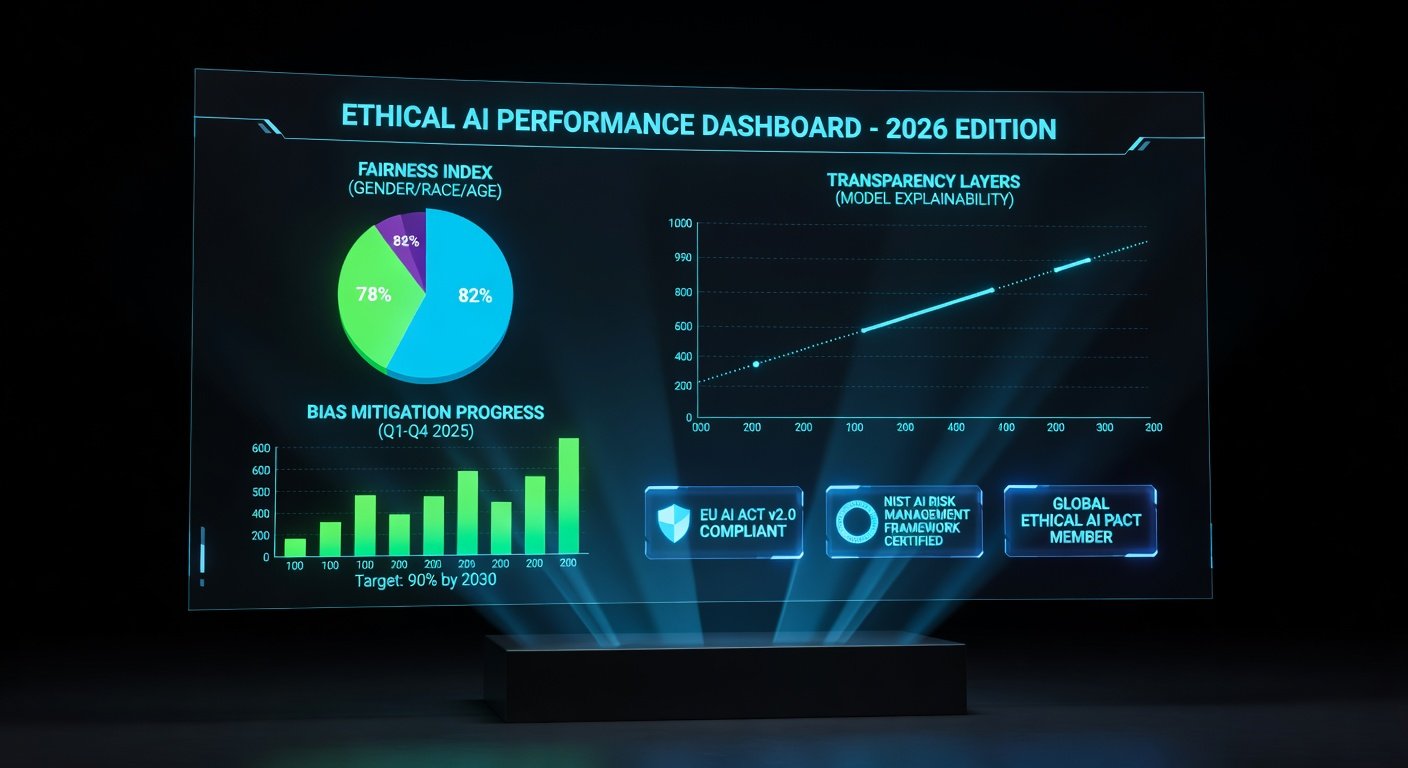

In 2026, the landscape of artificial intelligence has evolved dramatically, shaped by regulations like the EU AI Act and voluntary frameworks like NIST's AI Risk Management Framework. Companies deploying machine learning (ML) models face growing scrutiny over bias, transparency, and fairness. Ethical AI isn't just a buzzword; it's a necessity to avoid fines, reputational damage, and legal battles. This guide provides a how-to for selecting and implementing ethical AI tools, so your ML projects comply with emerging standards while delivering robust, equitable outcomes.

From healthcare diagnostics to hiring algorithms, biased models have caused real-world harm. Consider the 2016 COMPAS recidivism tool controversy, in which a ProPublica analysis found that Black defendants were more likely to be incorrectly labeled high risk. Fast-forward to 2026: regulators demand auditable, fair systems. By integrating ethical tools early, you can build trust, catch data problems before deployment, and future-proof your AI initiatives.

The 2026 Regulatory Landscape: What You Need to Know

By 2026, ethical AI compliance is non-negotiable. Key regulations include:

- EU AI Act: Classifies AI systems by risk level, mandating transparency for high-risk ML applications like biometric identification.

- US Executive Order 14110 on AI (2023): Emphasized bias mitigation and equitable AI development. It was rescinded in January 2025, so verify current federal and state requirements.

- Global Standards: OECD AI Principles and NIST AI RMF provide frameworks for trustworthy AI.

Non-compliance can result in penalties of up to 7% of global annual turnover (or EUR 35 million, whichever is higher) for the most serious violations under the EU AI Act. Ethical tools help automate compliance, from bias audits to explainability reports.

Core Principles of Ethical AI in ML

Focus on three pillars:

- Bias Mitigation: Identify and reduce disparities in training data and predictions across demographics.

- Transparency: Make model decisions interpretable to stakeholders.

- Fairness: Ensure equitable outcomes, measured by metrics like demographic parity or equalized odds.

Tools aligned with these principles integrate seamlessly into ML pipelines, supporting frameworks like TensorFlow, PyTorch, and Scikit-learn.

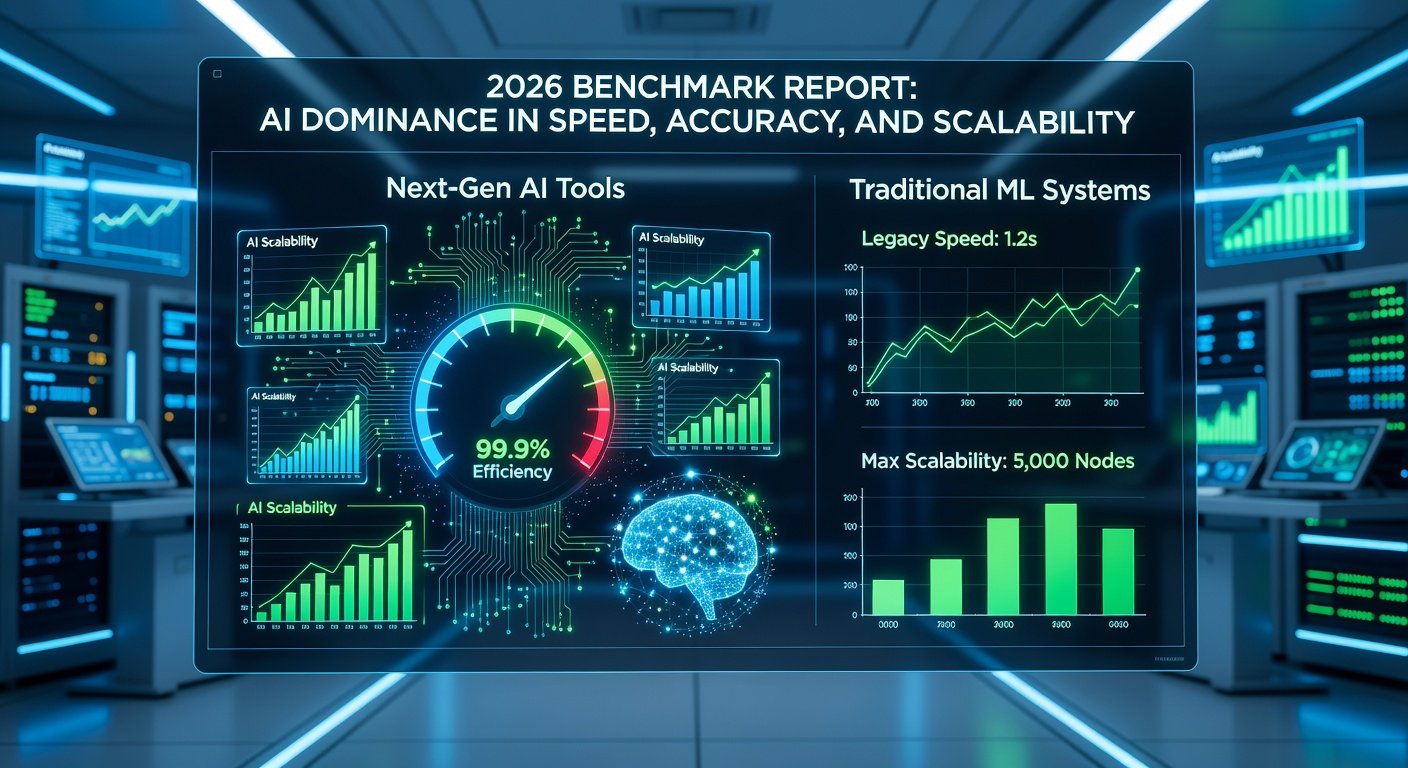

Top Ethical AI Tools for ML in 2026

Here's a curated list of battle-tested open-source tools:

- AI Fairness 360 (AIF360): IBM's toolkit for detecting and mitigating bias. Supports 70+ metrics and nine debiasing algorithms.

- Fairlearn: Microsoft's library for assessing and improving fairness in ML models. Integrates with Azure ML.

- What-If Tool: Google's tool for interactive model analysis, available as a TensorBoard plugin and notebook widget, visualizing bias and counterfactuals.

- MLflow: Tracks experiments; fairness metrics can be logged alongside accuracy metrics for each run.

- Alibi: Focuses on explainability with techniques like anchors, counterfactual explanations, and Kernel SHAP.

For enterprise needs, commercial options like Google's Responsible AI Toolkit or H2O.ai's Driverless AI offer scalable solutions with audit trails.

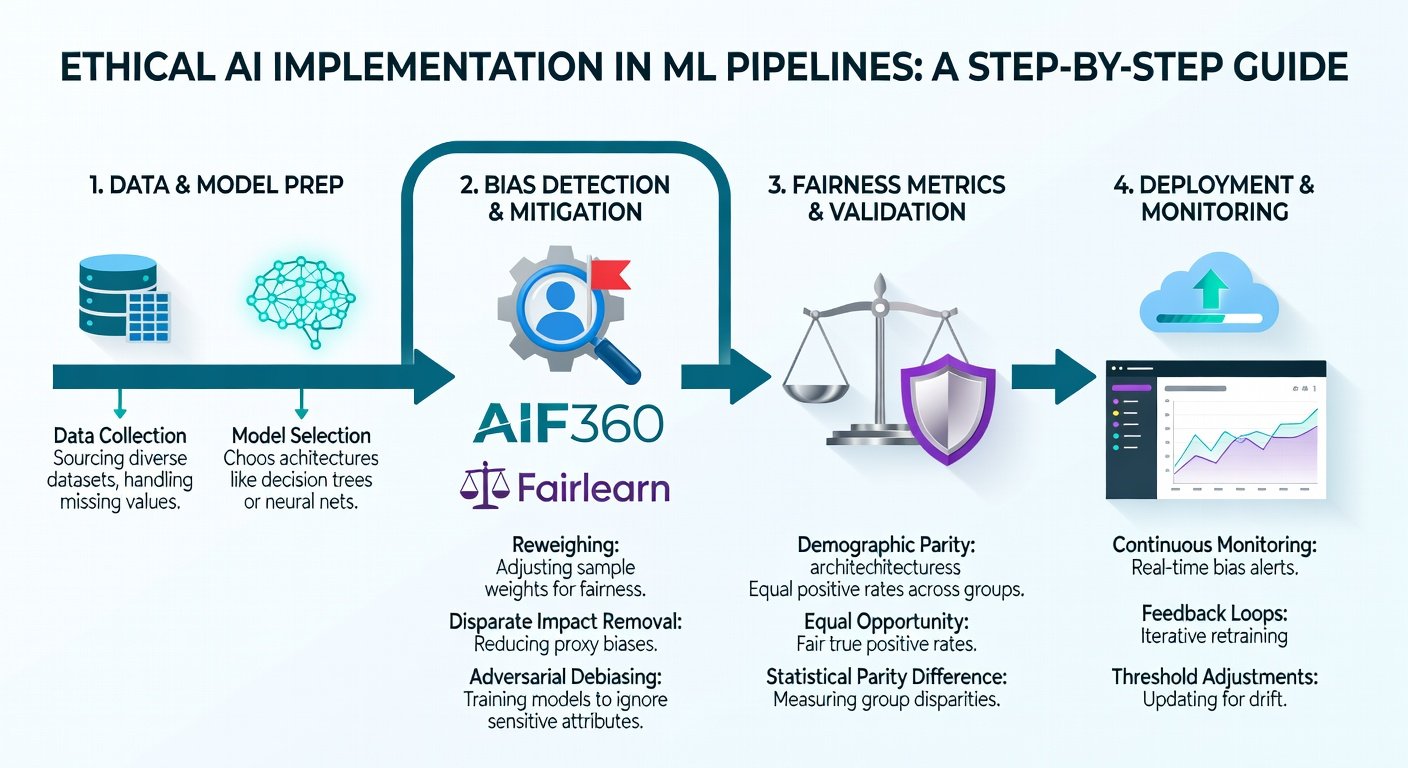

Step-by-Step Guide: Selecting Ethical AI Tools

Step 1: Assess Your ML Pipeline

Map your workflow: data collection, preprocessing, training, deployment. Identify high-risk stages, like feature selection where proxy variables (e.g., ZIP code for race) introduce bias.
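One rough way to screen for proxy variables is to check how well a single feature predicts the protected attribute on its own. The sketch below uses only the Python standard library; the function name `proxy_strength` and the toy records are hypothetical, and a real audit would use a held-out split and a proper association measure.

```python
from collections import Counter, defaultdict

def proxy_strength(feature_values, protected_values):
    """Return (baseline, proxy_accuracy).

    baseline: accuracy of always guessing the most common protected group.
    proxy_accuracy: accuracy of guessing the most common group within each
    feature value. A large gap suggests the feature acts as a proxy.
    """
    if len(feature_values) != len(protected_values) or not feature_values:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(protected_values)
    baseline = Counter(protected_values).most_common(1)[0][1] / n

    # Count protected-group membership separately for each feature value.
    groups_by_feature = defaultdict(Counter)
    for f, g in zip(feature_values, protected_values):
        groups_by_feature[f][g] += 1
    correct = sum(c.most_common(1)[0][1] for c in groups_by_feature.values())
    return baseline, correct / n

# Toy data (made up for illustration): ZIP code vs. a protected group label.
zip_codes = ["10001", "10001", "10001", "20002", "20002", "20002", "30003", "30003"]
groups = ["a", "a", "a", "b", "b", "b", "a", "b"]

baseline, proxy_acc = proxy_strength(zip_codes, groups)
print(f"baseline={baseline:.3f} proxy_accuracy={proxy_acc:.3f}")
```

A proxy accuracy well above the baseline is a signal to review the feature, not proof that it must be dropped.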

Step 2: Define Fairness Metrics

Choose metrics based on use case:

- Demographic Parity: Equal positive rates across groups.

- Equal Opportunity: Equal true positive rates.

- Calibration: Predicted probabilities match actual outcomes per group.

Use tools like Fairlearn's MetricFrame to baseline your model.
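To make the first two metrics concrete, here is a minimal plain-Python sketch that computes per-group selection rates and true positive rates, then the largest gap between groups. The helper names and the toy labels are assumptions for illustration; a library such as Fairlearn provides a comparable per-group breakdown, and this block is not its implementation.

```python
def rate(values):
    """Fraction of 1s in a list of 0/1 values (0.0 for an empty list)."""
    return sum(values) / len(values) if values else 0.0

def group_metrics(y_true, y_pred, groups):
    """Per-group selection rate and true positive rate."""
    out = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        preds = [y_pred[i] for i in idx]
        preds_for_positives = [y_pred[i] for i in idx if y_true[i] == 1]
        out[g] = {
            "selection_rate": rate(preds),                    # demographic parity
            "true_positive_rate": rate(preds_for_positives),  # equal opportunity
        }
    return out

def max_gap(metrics, name):
    """Largest difference in one metric between any two groups."""
    vals = [m[name] for m in metrics.values()]
    return max(vals) - min(vals)

# Toy labels and predictions (made up for illustration).
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 0, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

metrics = group_metrics(y_true, y_pred, groups)
dp_gap = max_gap(metrics, "selection_rate")
eo_gap = max_gap(metrics, "true_positive_rate")
print(metrics)
print(f"demographic parity gap={dp_gap:.3f}, equal opportunity gap={eo_gap:.3f}")
```

The two gaps differ on the same predictions, so a model can look acceptable on one metric and not on the other.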

Step 3: Evaluate Tools

Create a comparison matrix:

| Tool | Bias Detection | Mitigation | Explainability | Integration Ease |
|---|---|---|---|---|
| AIF360 | Excellent | Excellent | Good | Medium (Python) |
| Fairlearn | Excellent | Good | Good | High |
| What-If Tool | Good | Limited | Excellent | High (TensorBoard, notebooks) |

Test on a sample dataset from Fairlearn's datasets.

Step 4: Pilot Integration

Start small: Retrofit an existing model. For AIF360:

```python
from aif360.datasets import BinaryLabelDataset
from aif360.algorithms.preprocessing import Reweighing

# Load dataset, apply reweighing for bias correction
```

Implementing Ethical AI: Hands-On Instructions

Bias Mitigation Workflow

- Preprocess Data: Use AIF360's `Reweighing` or `LFR` (Learning Fair Representations) to balance datasets.

- Train with Constraints: Integrate Fairlearn's `ExponentiatedGradient` reducer during optimization.

- Post-Processing: Apply thresholds to equalize outcomes without retraining.

- Validate: Run disparity metrics; aim for <10% group imbalance.
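The preprocessing step can be illustrated with the idea behind reweighing: give each (group, label) combination the weight P(group) * P(label) / P(group, label), so that group and label look statistically independent in the weighted data. The sketch below is a plain-Python illustration of that formula on made-up data, not AIF360's implementation; the function names are hypothetical.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Weight for each (group, label) pair: P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return {
        (g, y): (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for (g, y) in joint_counts
    }

def weighted_positive_rate(groups, labels, weights, group):
    """Share of total weight in `group` that belongs to positive labels."""
    pairs = [(g, y) for g, y in zip(groups, labels) if g == group]
    num = sum(weights[(g, y)] for g, y in pairs if y == 1)
    den = sum(weights[(g, y)] for g, y in pairs)
    return num / den

# Toy data (made up): group "a" has far more positive labels than group "b".
groups = ["a"] * 6 + ["b"] * 4
labels = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
print(weights)
print(f"weighted positive rate: a={rate_a:.3f}, b={rate_b:.3f}")
```

After weighting, both groups show the same positive rate as the overall dataset; the weights would then be passed to a learner that accepts per-sample weights.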

Ensuring Transparency and Explainability

Leverage SHAP for feature importance:

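```python
import shap

# `model` is a trained model and `X_test` the held-out features (both assumed to exist).
explainer = shap.Explainer(model)
shap_values = explainer(X_test)
```

Generate reports for regulatory submission, including counterfactual examples via What-If Tool.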
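To show what Shapley-style attributions mean, the sketch below computes exact Shapley values for one input of a tiny, made-up scoring function by enumerating every feature subset. It is an illustration of the underlying definition, not the SHAP library's algorithm (which uses faster approximations); `toy_model`, the feature names, and the baseline are all assumptions.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values for one input, by enumerating all feature subsets.

    Features outside a subset are replaced with their baseline value.
    Cost grows as 2**n, so this is only practical for a handful of features.
    """
    n = len(x)

    def value(subset):
        mixed = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for subsets of this size: |S|! (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

def toy_model(features):
    """Hypothetical scoring function with one interaction term."""
    income, debt, tenure = features
    return 0.5 * income - 0.3 * debt + 0.1 * income * tenure

x = [4.0, 2.0, 3.0]
baseline = [1.0, 1.0, 1.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)
```

The attributions sum to the difference between the model output for `x` and for the baseline, which is the property that makes them usable in an audit report.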

Deployment Best Practices

- Automate monitoring with MLflow: Log fairness metrics per inference batch.

- Human-in-the-Loop: Flag high-risk predictions for review.

- Version Control: Track model iterations with ethical audits.
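The monitoring and human-in-the-loop practices above can be sketched as a per-batch check. This is a minimal standard-library illustration; the function names, the 0.10 gap limit (echoing the 10% target mentioned earlier), and the 0.05 review margin are assumptions to tune for your own system.

```python
def selection_rate_gap(predictions, groups):
    """Largest difference in positive-prediction rate between any two groups."""
    totals, positives = {}, {}
    for p, g in zip(predictions, groups):
        totals[g] = totals.get(g, 0) + 1
        positives[g] = positives.get(g, 0) + p
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)

def review_batch(scores, groups, threshold=0.5, gap_limit=0.10, margin=0.05):
    """Return a small report for one inference batch.

    gap: selection-rate gap for the batch
    alert: True when the gap exceeds gap_limit
    needs_review: indices of scores within `margin` of the decision threshold
    """
    predictions = [1 if s >= threshold else 0 for s in scores]
    gap = selection_rate_gap(predictions, groups)
    needs_review = [i for i, s in enumerate(scores) if abs(s - threshold) <= margin]
    return {"gap": gap, "alert": gap > gap_limit, "needs_review": needs_review}

# Toy batch of model scores (made up for illustration).
scores = [0.91, 0.48, 0.75, 0.30, 0.52, 0.20, 0.35, 0.10]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

report = review_batch(scores, groups)
print(report)
```

In a tracked deployment, the `gap` value could be recorded per batch with an experiment tracker (for example via `mlflow.log_metric`), and the `needs_review` indices routed to a human reviewer.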

Real-World Examples: Successes and Pitfalls

Success: Healthcare AI at Mayo Clinic

In 2025, Mayo Clinic reportedly used Fairlearn to debias a pneumonia prediction model, reducing racial disparities by 25% while maintaining 92% accuracy. This aligned with HIPAA and boosted patient trust.

Pitfall: Amazon's Hiring Tool (Lessons Learned)

Amazon scrapped an experimental recruiting AI, as reported in 2018, after it penalized résumés associated with women because its training data came mostly from male applicants. Post-mortem: Lack of early bias checks. In 2026, similar failures could trigger class-action suits.

Success: Google's PaLM 2 Ethics Integration

Using internal tools akin to What-If, Google ensured multilingual fairness, as detailed in their transparency reports.

Another example: a financial services firm using AIF360 mitigated loan-denial bias, complying with OECD guidelines and increasing approvals for underrepresented groups by 15%.

Common Mistakes to Avoid

- Over-Reliance on One Metric: Fairness is multi-dimensional; use a suite.

- Ignoring Domain Expertise: Involve ethicists and end-users.

- Neglecting Continuous Monitoring: Bias drifts post-deployment.

- Tool Silos: Ensure end-to-end pipeline integration.

Future-Proofing Your ML Strategy for 2026 and Beyond

Invest in team training via certifications like Certified Ethical Emerging Technologist (CEET). Foster a culture of responsible AI with cross-functional ethics boards. As quantum ML emerges, ethical tools will evolve; stay agile by subscribing to updates from the AIF360 and Fairlearn communities.

Conclusion

Ethical AI tools empower you to harness ML's power responsibly in 2026. By following this guide (assessing needs, selecting tools, implementing workflows, and learning from examples), you'll build fair, transparent models that pass regulatory muster. Start today: audit one model and iterate. The future of AI is ethical, equitable, and yours to shape.
